Democratizing And Scaling Research

ROLE

Sole UX Researcher supporting Design and Product

TIMELINE

Ongoing since early 2026

SCOPE

Interactive research playbook built entirely using AI, office hours, 1:1 support, AI experimentation

IMPACT

Positive reception from design leadership and stakeholders. Adoption tracking in progress

Key Outcomes & Decisions

Defined and implemented a system to scale qualitative research across the organization as the sole researcher, enabling broader team participation

Built an AI-powered interactive research assistant and playbook that standardizes study design, recruiting, and execution, without requiring any engineering support

Established guardrails to ensure that the speed gained from AI and self-service research did not compromise methodological rigor

Shifted stakeholder engagement from ad hoc support to structured enablement, allowing deeper, more strategic research collaboration

The Situation

Through a combination of layoffs and departures, Constant Contact's research team had been reduced to one. I was the sole qualitative researcher supporting the product organization. At the same time, leadership was pushing for more qualitative research across the product, faster timelines, and broader use of AI. Designers and PMs were interested in running their own studies, and some had already started, but most lacked access to research tools and a shared set of practices to follow.

The need was clear: if research was going to scale beyond one person, there had to be a foundation in place. Not just tool access, but guidance on methodology, study design, recruiting, and analysis, the kind of structure that helps people produce research they can trust even when they're doing it for the first time. Research that isn't done well can still look convincing. A study can return clean results from leading questions or a narrow sample, and the problems only surface after decisions have already been made. Setting up best practices early was about making sure the organization's growing interest in qualitative research would lead to insights they could act on with confidence.

How I Worked

Process and tools

Before enablement became a dedicated initiative, I was already helping stakeholders one-on-one: reviewing test plans, giving feedback on scripts, and answering questions about recruiting. That support was valuable, but it didn't scale. Each conversation helped one person with one study.

One of the key pieces I own is an interactive research playbook, a self-contained web tool I built entirely in Cursor without any engineering support. It's structured around a methodology guide that helps people figure out the right approach for their study, and a step-by-step checklist that walks them through execution: script templates with inline guidance on question structure, recruiting playbooks with email and screener templates, and a timeline planner that maps research phases to business days. Every component is conditional, adapting to the specific combination of study type, audience, and format the user selects.

The playbook is still in its early days, and I expect its broader impact to become visible over time. The goal is for it to handle the foundational questions so that when stakeholders do connect with me, the conversation can go deeper.

AI in my research practice

This is one of the most exciting parts of the work right now. The tools available to researchers are changing quickly, and figuring out how to use them without compromising rigor is a challenge I'm genuinely enjoying.

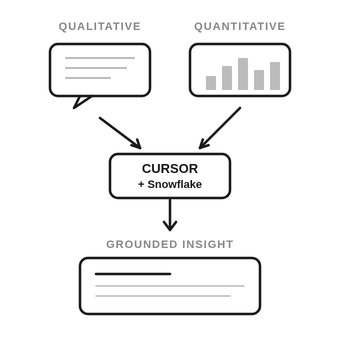

I use Cursor daily across my research practice. Because it connects to our Snowflake database, I can query usage data directly, adding a quantitative dimension to my work that used to be slower and harder to access. If a study surfaces a behavior pattern, I can check whether it shows up in the product data within minutes. That feedback loop has made insights more grounded and more persuasive.

Leadership is actively investing in AI tools across the organization, and we're looking into platforms that offer AI-moderated interviews. As the team's qualitative research expert, I'm the one assessing these tools: running studies through them and evaluating whether the output holds up against what a skilled moderator would produce. The playbook addresses the process constraint. AI-moderated tools could address the time constraint.

Principles Behind The Approach

Meeting people where they are

Stakeholders think in terms of questions they need answered, not methodologies. Enablement has to start from their context, whether that's in a tool, a template, or a conversation.

Knowing what to own and what to enable

Not every study should be self-service. That line shifts depending on the study, the stakeholder, and the tools available, and part of the work is continuously reassessing where it should be.

Continuous iteration

What people need changes as they get more comfortable and as new tools become available. Everything so far is a first draft, built to evolve.

The Challenges

Speed vs. rigor

Leadership wants research to happen faster, and AI tools are creating real opportunities for that. But speed without rigor produces findings that feel actionable but aren't. The challenge is knowing where AI can accelerate the process without compromising quality, and being willing to push back when it can't.

Being the only researcher and deciding what to prioritize

Research enablement doesn't replace research work. I'm still running studies, delivering findings, and supporting multiple product areas. Every hour spent building the playbook or evaluating a new tool is an hour not spent on a study. That tradeoff requires constant recalibration, and my priorities shift depending on what the organization needs most at any given moment.

Where It’s Going

This effort is still evolving, and the real measure of success will be whether people run studies independently and produce research that holds up.

There's a useful parallel already happening on the quantitative side of the organization. Data analysts used to be the only ones answering detailed data questions. With tools like Cursor connected to Snowflake, that's changed. More people across the org can query data directly, while analysts have shifted toward connecting new data sources, answering more complex strategic questions, and clearing roadblocks. They're still essential, but their role has evolved. I see qualitative research heading in a similar direction: stakeholders running their own studies for well-defined questions, while researchers focus on the bigger picture, complex multi-method work, and continued enablement.

I'm optimistic about where this is going. AI-moderated interviews, better tooling, and a growing comfort with research practices across the team are all converging. The goal is to make sure the foundation is solid so the organization is ready to move when the tools catch up.